Workerbee -- SLAM Navigation Robot

This project explores how computer vision can be integrated into a complete robotic system that senses, interprets, and reacts to the physical world. The robot was developed around two primary modes: a ball tracking system for responsive visual following and a SLAM style mapping mode for localization and environmental modeling.

Rather than treating vision as an isolated feature, the project connects camera input, motion control, sensor fusion, and map generation into one workflow. The result is a system that can both respond to immediate visual targets and construct a broader spatial understanding of its environment.

Physical Design and Construction

Hardware design

The physical design of the robot was built around the need to support real time sensing, stable movement, and modular hardware integration. The chassis had to carry the Raspberry Pi, camera system, motor hardware, distance sensing components, and supporting power connections while still remaining compact enough to maneuver effectively.

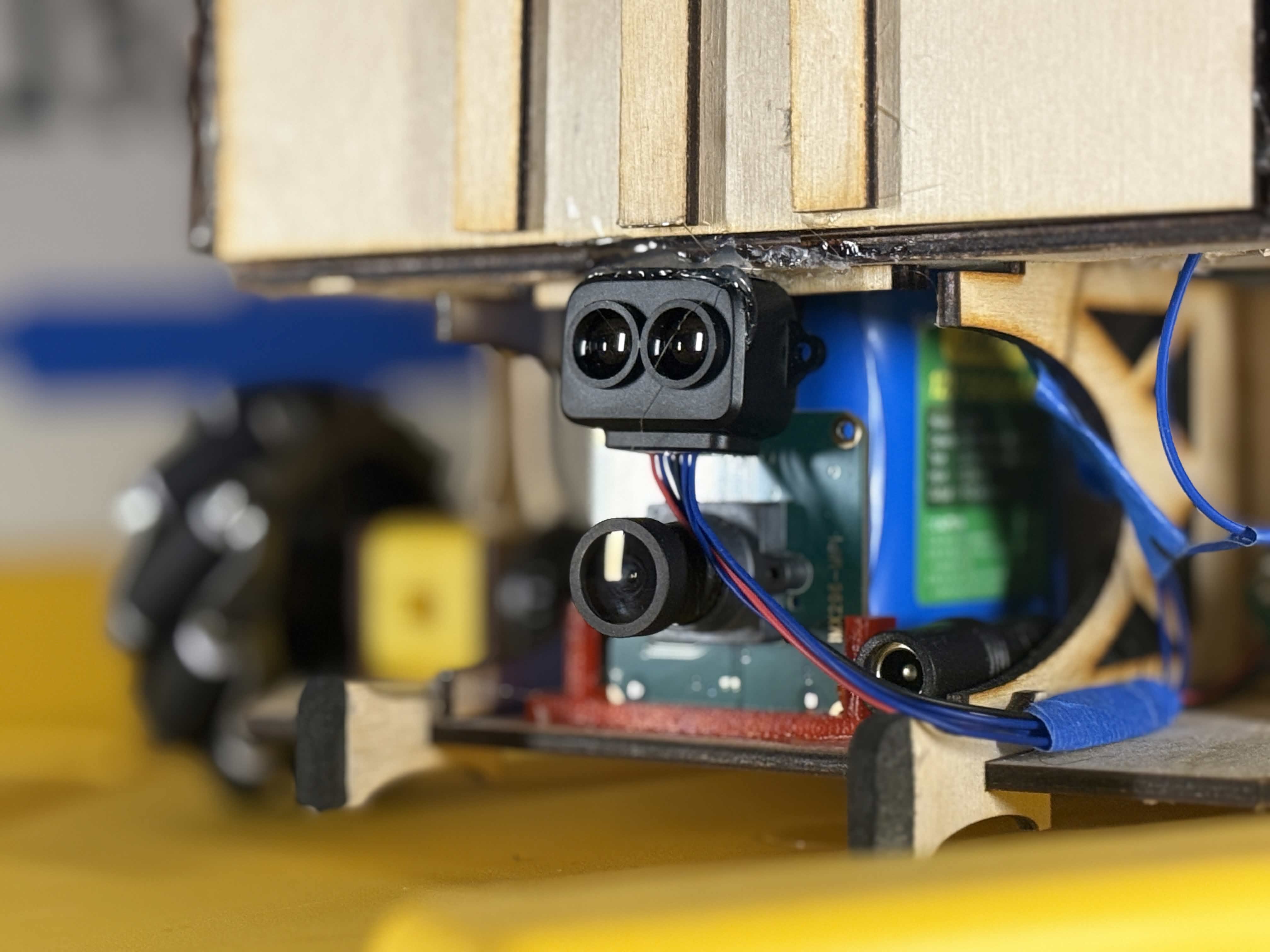

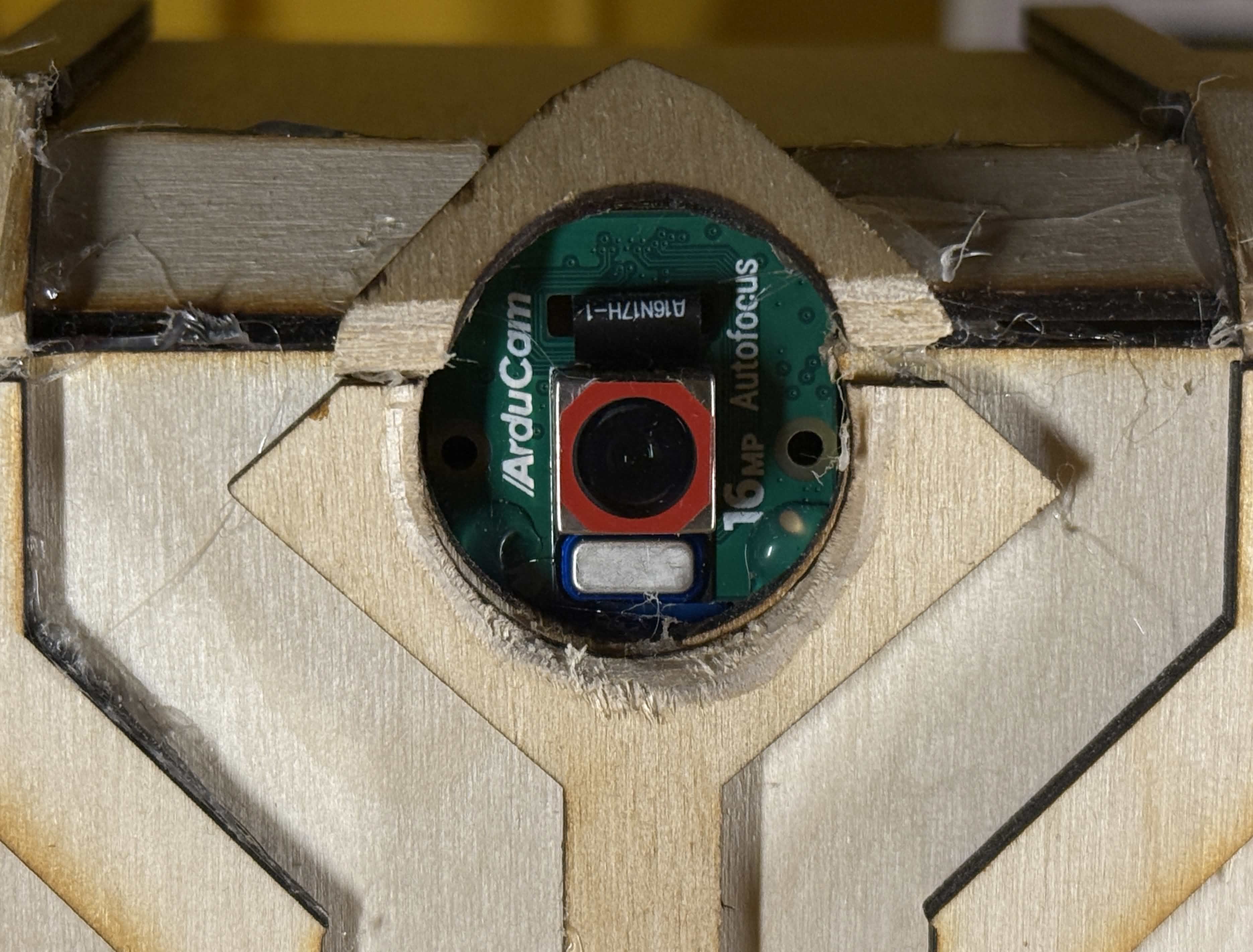

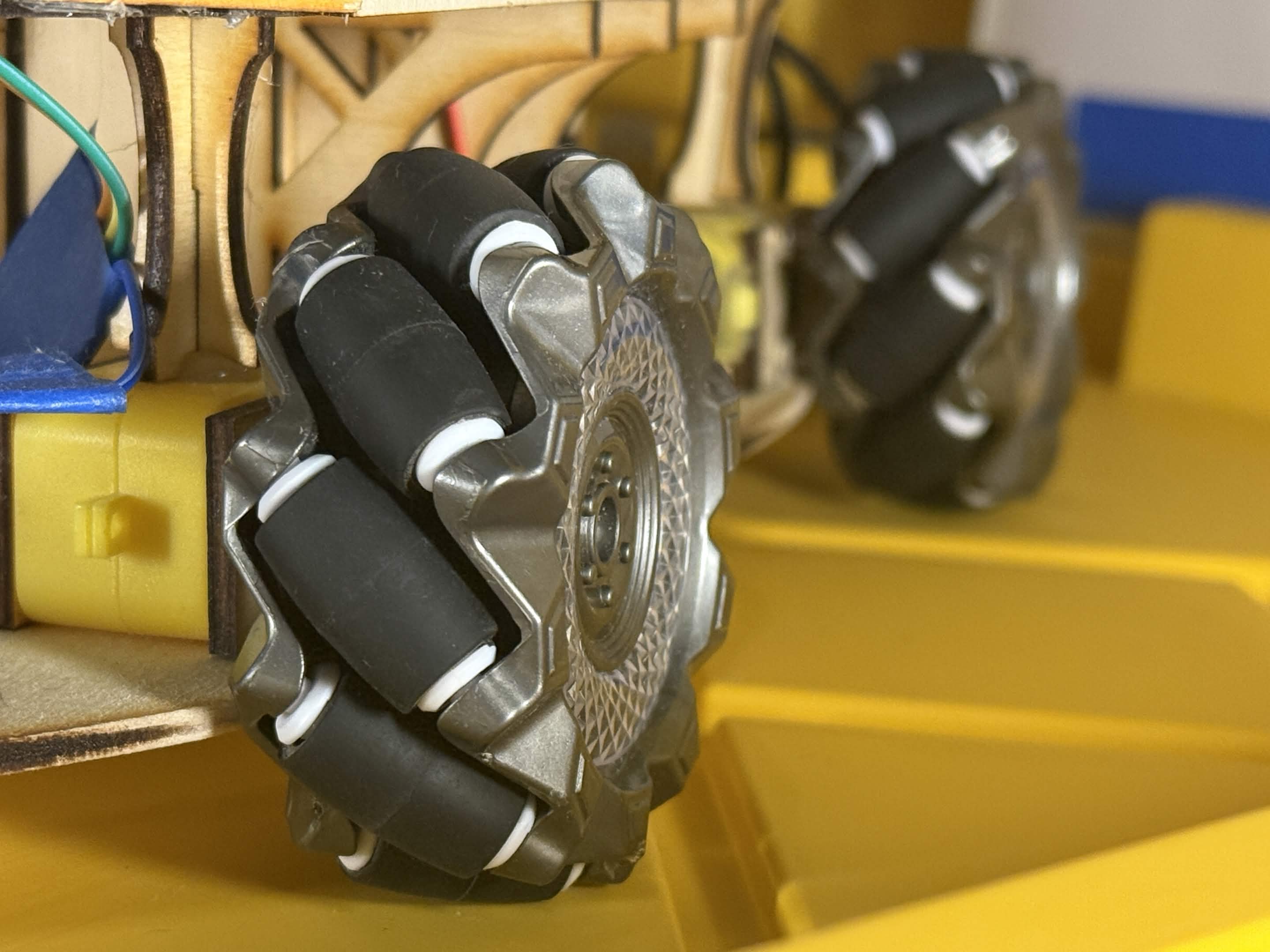

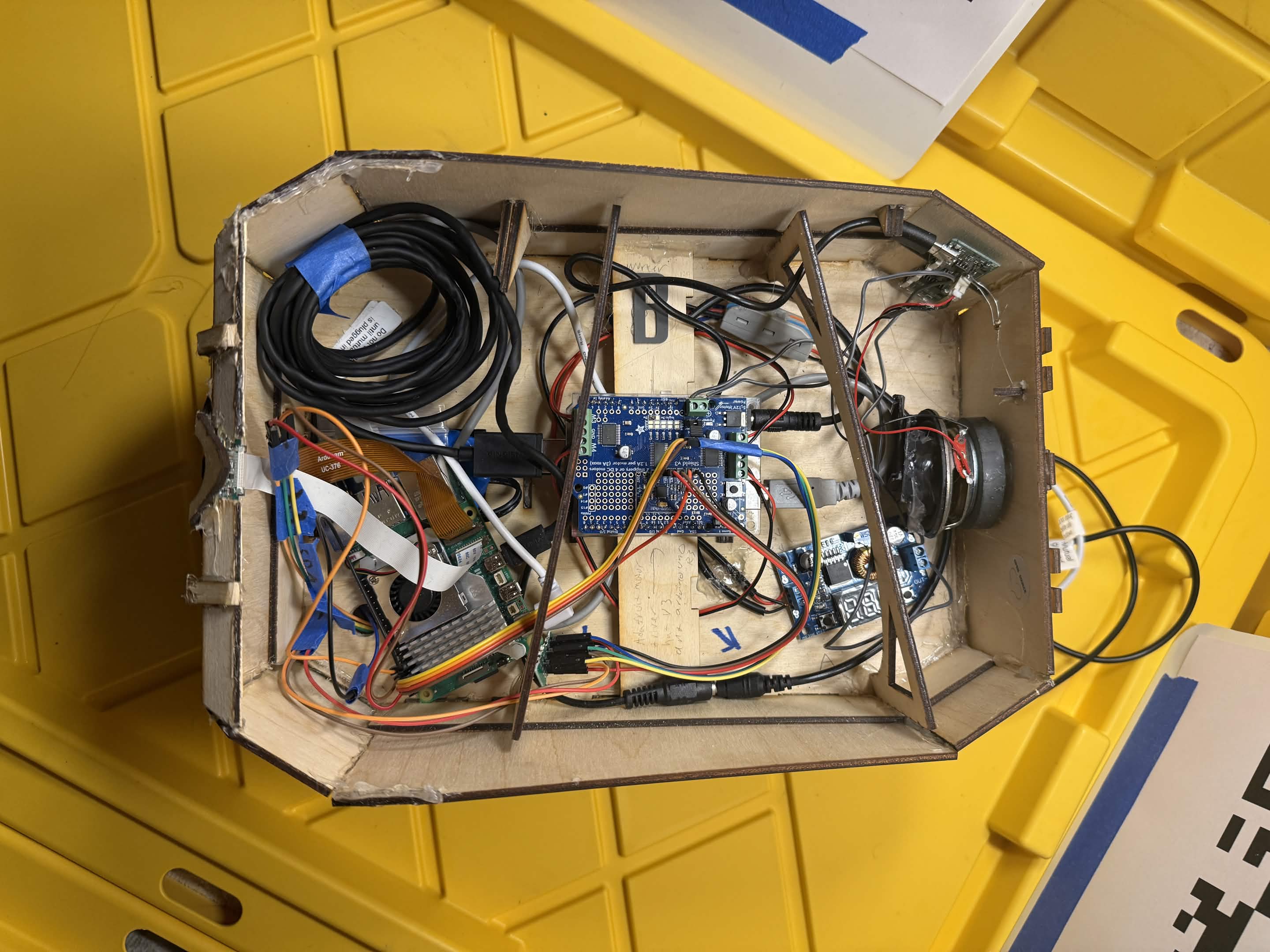

Construction focused on making the robot practical to test and revise. Sensor placement was especially important because camera angle, rotational stability, and distance sensor orientation all directly affected the quality of the tracking and mapping results. The mechanical layout therefore had to support both visibility and consistent motion. The body parts were made from laser engraved wood and designed in Tinkercad. The design uses mecanum wheels for omnidirectional motion, which lets the robot work its way out of corners and tight spaces.

The brain of the project is a Raspberry Pi 5 (16 GB) running Debian Bookworm. Its percepts come from two cameras: a 16 MP autofocus, rolling shutter color camera and a 2 MP manual focus, global shutter monochrome camera. Its other sensors include a microphone (currently unused), a 3-axis accelerometer, and a single-point LiDAR detector. These components are powered by a 32,000 mAh lithium-ion portable charger (2.4 A maximum draw per port).

The robot also runs an Arduino Uno R3 with an Adafruit motor driver hat, which powers each of the four wheel motors and controls its direction and speed individually. The motors are powered by a separate 5,600 mAh lithium-ion battery (5 A maximum output). This separate power system shares a ground with the battery pack, and the Arduino is connected over USB to the Raspberry Pi using a serial protocol I built for this project. The two systems are isolated from each other to keep motor noise away from the compute side and to leave the "thought" end of the robot unaffected by voltage drops when the motors draw stall current, which protects the more delicate electronics from damage. A laser odometer can also be added when needed.
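As an illustration of how four individually driven mecanum wheels produce omnidirectional motion, here is a minimal sketch of the standard mecanum mixing equations. It is not the project's firmware: the function name, the sign conventions (forward, left, and counter-clockwise positive), and the normalized -1 to 1 speed range are all assumptions, and the signs depend on how the rollers are oriented on a given chassis.

```python
def mecanum_wheel_speeds(vx, vy, omega):
    """Mix a body motion command into four wheel speeds.

    Assumed conventions (hypothetical, not taken from the project):
    vx    forward (+) / backward (-)
    vy    strafe left (+) / right (-)
    omega rotate counter-clockwise (+) / clockwise (-)
    Returns (front_left, front_right, rear_left, rear_right), each in [-1, 1].
    """
    front_left = vx - vy - omega
    front_right = vx + vy + omega
    rear_left = vx + vy - omega
    rear_right = vx - vy + omega

    wheels = (front_left, front_right, rear_left, rear_right)
    # If any wheel would exceed full speed, scale all four down together
    # so the direction of travel is preserved.
    scale = max(1.0, *(abs(w) for w in wheels))
    return tuple(w / scale for w in wheels)
```

With these conventions, a pure forward command drives all four wheels equally, while a pure strafe command spins the diagonal pairs in opposite directions.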

Another major consideration was accessibility during development. Because the project required repeated debugging and rewiring, the design needed to make components easy to reach, replace, and reposition. The entire top of the robot opens so that the electronics bay can be upgraded. This made the robot not only a software platform but also a hardware prototype that evolved alongside the code. In the future, the easy-access roof may also be replaced with other modules, such as an arm.

Project Modes

Ball Tracking

The ball tracking system demonstrates how the robot uses computer vision to detect, locate, and react to a moving visual target in real time. A live camera feed is processed frame by frame to isolate the ball from the background, estimate its center, and measure how far it is from the middle of the image.

That visual offset becomes a control signal. If the ball appears left of center, the robot turns left. If it appears right, the robot turns right. When the target is aligned, the robot can advance or hold its position depending on the active control logic. This creates a closed loop system where perception directly influences motion. This was done using a classical computer vision approach.
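The step from a detected ball to a turn decision can be sketched roughly as follows. This is a simplified illustration in plain Python, not the project's code: it assumes the ball has already been isolated into a binary mask, and the names `mask_centroid` and `steering_command`, the command strings, and the 10% dead band are all hypothetical.

```python
def mask_centroid(mask):
    """Return the (x, y) centre of all non-zero pixels, or None if empty.

    `mask` is a list of rows, where a truthy value marks a ball pixel.
    """
    count = sum_x = sum_y = 0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None  # no ball visible in this frame
    return sum_x / count, sum_y / count


def steering_command(ball_x, frame_width, dead_band=0.1):
    """Map the ball's horizontal position to a turn command.

    The offset is normalized to -1.0 (far left) .. +1.0 (far right).
    Offsets inside the dead band count as "aligned".
    """
    half = frame_width / 2.0
    offset = (ball_x - half) / half
    if offset < -dead_band:
        return "LEFT", offset
    if offset > dead_band:
        return "RIGHT", offset
    return "FORWARD", offset
```

The dead band is one simple way to stop the robot from turning back and forth when the ball is already close to the middle of the image.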

The main challenge in this mode was reliability under real world conditions. Lighting variation, cluttered backgrounds, and motion blur all made consistent detection more difficult. Another issue was preventing jitter and oscillation caused by small fluctuations in the detected target position. This was exacerbated by the communication delay between the Raspberry Pi and the Arduino, which led to multiple redesigns. Filtering and command pacing were critical to making the behavior smooth enough to be usable. Below are some examples of it working, along with the program flowchart.
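One common way to combine filtering with command pacing is an exponential moving average plus a minimum interval between commands. The sketch below shows that idea only; the class name `SmoothedPacer`, the smoothing factor, and the 100 ms interval are assumptions rather than the values used on the robot.

```python
import time


class SmoothedPacer:
    """Smooth a noisy measurement and limit how often it is acted on."""

    def __init__(self, alpha=0.3, min_interval=0.1, clock=time.monotonic):
        self.alpha = alpha                # 0..1, higher reacts faster
        self.min_interval = min_interval  # seconds between commands
        self.clock = clock                # injectable for testing
        self.value = None
        self.last_sent = None

    def update(self, measurement):
        """Feed one measurement.

        Returns the smoothed value when a command is due, otherwise None.
        The filter is updated on every call, even when nothing is sent.
        """
        if self.value is None:
            self.value = measurement
        else:
            self.value += self.alpha * (measurement - self.value)

        now = self.clock()
        if self.last_sent is not None and now - self.last_sent < self.min_interval:
            return None  # too soon since the last command
        self.last_sent = now
        return self.value
```

Every frame still updates the filter, so the value that is eventually sent reflects recent frames without flooding the serial link with commands.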

SLAM Mode

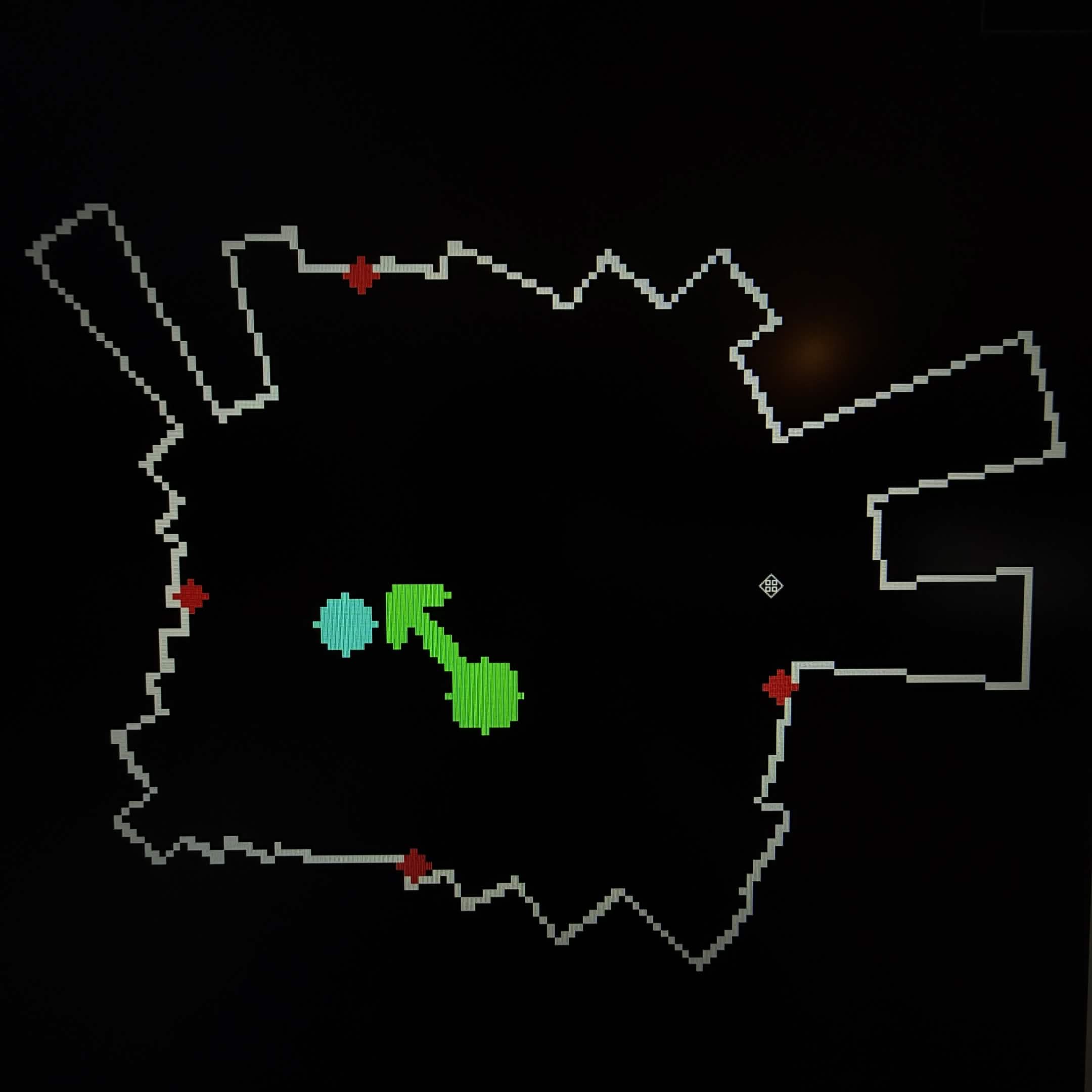

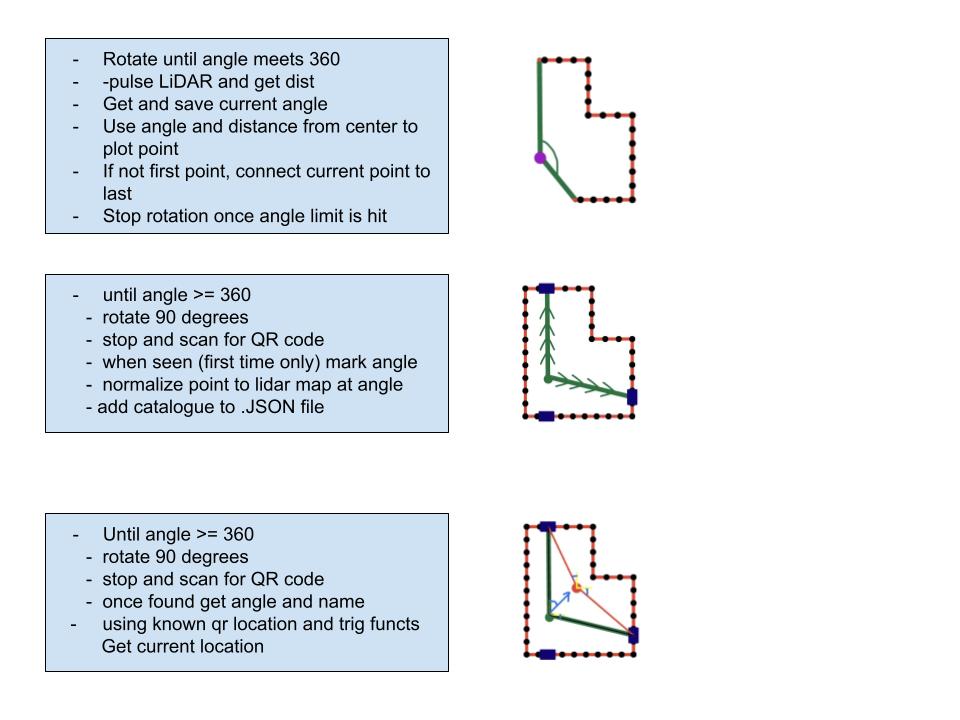

The SLAM mode was built to let the robot observe its surroundings, create a 2D map, and estimate its position within that map. During a scan, the robot rotates while collecting structural information from distance sensing and landmark information from the camera, including QR code detections that can serve as recognizable reference points.

Each measurement is transformed from sensor space into map coordinates and stored in an occupancy style grid. Over time, the robot builds a representation of nearby walls, obstacles, and landmarks. The gyroscope supports this process by estimating heading during rotation so incoming readings can be placed in the correct direction.
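The transform from a single range reading into a grid cell can be sketched as below. This is an assumed, minimal version: the function name `mark_hit`, the list-of-lists grid, the hit counter per cell, and the convention that heading is measured counter-clockwise from the map's x axis are illustrative choices, not details from the project.

```python
import math


def mark_hit(grid, robot_xy, heading_deg, distance_m, cell_size_m=0.05):
    """Place one range reading into an occupancy-style grid.

    grid        list of rows; each cell counts how often it was hit
    robot_xy    robot position in map coordinates (metres)
    heading_deg direction of the reading, counter-clockwise from +x (assumed)
    Returns the (col, row) that was marked, or None if it falls off the map.
    """
    theta = math.radians(heading_deg)
    # Polar (heading, distance) in the robot frame -> Cartesian map point
    x = robot_xy[0] + distance_m * math.cos(theta)
    y = robot_xy[1] + distance_m * math.sin(theta)

    col = math.floor(x / cell_size_m)
    row = math.floor(y / cell_size_m)
    if 0 <= row < len(grid) and 0 <= col < len(grid[0]):
        grid[row][col] += 1
        return col, row
    return None
```

Counting hits per cell, rather than storing a single flag, makes it possible to ignore cells that were only touched once by a noisy reading.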

This mode required careful sensor fusion. Small heading errors, sign mistakes in angle math, or unstable turning could mirror the map or shift points into incorrect positions. I went through multiple ways of placing the QR codes on the map before they were reliable enough to use for localization. Originally I printed QR codes that were exactly 7.35 x 7.35 inches and used the keystoning and the corner-to-corner distance in pixels to calculate their distance and angle, but this approach had serious problems with lens distortion and glare. QR codes were tracked reliably in the center of the frame but became heavily skewed as they approached its edges.

The current method locates a QR code using only angles and normalization: starting from the robot's center point, the position is the intersection between the line formed by the angle of offset and the line of the inferred wall. This gave a more reliable scanning method that anchors QR codes to known walls.

SLAM mode pushed the hardware harder than the ball tracking mode because mapping, camera processing, and motor control were all competing for time and compute resources at once, so compromises had to be made. Due to limited processing power, I opted for a lower LiDAR polling rate and a "live" connect-the-dots method rather than a post-processing model, which was only slightly faster, in favor of lower complexity. Below on the right is the program flow used for this.
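The angle-and-wall intersection used to place QR codes can be illustrated with a small ray and segment intersection. This is a sketch under assumptions, not the project's implementation: the name `locate_on_wall` is hypothetical, the wall is assumed to be available as two endpoints in map coordinates, and `bearing_deg` is assumed to already combine the robot heading with the camera's angle of offset.

```python
import math


def locate_on_wall(robot_xy, bearing_deg, wall_a, wall_b):
    """Intersect a bearing ray from the robot with a wall segment.

    Returns the (x, y) map position of the landmark, or None when the
    ray is parallel to the wall, points away from it, or misses the segment.
    """
    rx, ry = robot_xy
    dx = math.cos(math.radians(bearing_deg))
    dy = math.sin(math.radians(bearing_deg))
    ax, ay = wall_a
    ex, ey = wall_b[0] - ax, wall_b[1] - ay

    # Solve robot + t * d = wall_a + s * e using 2D cross products
    denom = dx * ey - dy * ex
    if abs(denom) < 1e-9:
        return None  # ray is parallel to the wall
    t = ((ax - rx) * ey - (ay - ry) * ex) / denom  # distance along the ray
    s = ((ax - rx) * dy - (ay - ry) * dx) / denom  # fraction along the wall
    if t < 0 or not 0.0 <= s <= 1.0:
        return None  # behind the robot or beyond the wall's ends
    return rx + t * dx, ry + t * dy
```

Because the result depends only on a bearing and a wall line, it avoids the pixel-size distance estimate that was sensitive to distortion near the frame edges.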

Engineering Challenges

Sensor Noise and Drift

Gyroscope drift, noisy distance measurements, and inconsistent motor behavior could shift plotted points and distort the final map. Improving scan structure and heading management was necessary to keep localization and mapping usable.
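For noisy distance measurements specifically, one widely used option is a short sliding median, which discards isolated spikes without blurring real edges as much as an average would. The sketch below is an example of that technique, not necessarily what the robot uses; the class name and window size are assumptions.

```python
from collections import deque
from statistics import median


class MedianFilter:
    """Sliding-window median for rejecting single-sample range spikes."""

    def __init__(self, window=5):
        # deque with maxlen drops the oldest sample automatically
        self.samples = deque(maxlen=window)

    def update(self, reading):
        """Add one reading and return the median of the current window."""
        self.samples.append(reading)
        return median(self.samples)
```

For example, feeding the readings 100, 102, 900, 101, 103 through a window of three never lets the 900 spike reach the output.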

Real Time Performance

Running camera processing, mapping, and motion control together on the Raspberry Pi introduced performance limits. The workflow had to be reorganized so different scan phases emphasized either distance collection or visual landmark capture.

Coordinate Consistency

Camera detections, robot heading, and map coordinates all had to agree. Small sign or angle errors could mirror the environment or place landmarks on the wrong side of the robot, which made debugging both mathematical and visual.
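A practical guard against this class of bug is to fix one angle convention and route every conversion through a pair of small helpers. The sketch below assumes headings that increase counter-clockwise and a camera offset that is positive toward the right of the image; both conventions and both function names are illustrative, not taken from the project.

```python
def wrap_deg(angle):
    """Wrap any angle in degrees into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def landmark_bearing(robot_heading_deg, camera_offset_deg):
    """Map-frame bearing of a landmark seen by the camera.

    Assumed conventions: heading grows counter-clockwise, while a
    positive camera offset means the landmark is right of image centre.
    A target to the right is therefore clockwise of the heading, so the
    offset is subtracted. Flipping this sign mirrors the map.
    """
    return wrap_deg(robot_heading_deg - camera_offset_deg)
```

Keeping the sign decision in one documented place means a mirrored map points to a single line to check instead of scattered angle math.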

Control Stability

Frequent or poorly timed motor commands caused unstable turning and reduced tracking quality. Motion logic had to be smoothed so the robot reacted predictably instead of constantly overcorrecting.

Why This Project Matters

This work demonstrates more than object detection or mapping in isolation. It shows how computer vision can operate as part of a broader autonomous robotics pipeline where sensing, control, and environment modeling depend on each other.

The ball tracking mode emphasizes responsive visual feedback and real time control. The SLAM mode emphasizes localization, mapping, and multi sensor integration. Together, they represent the practical challenges and engineering value of building robotics systems that must function in the real world rather than in ideal conditions.